In 1993, Richard Cleave (R. L. W. Cleave) wrote The Holy Land: A Unique Perspective, which to my knowledge (and as the book jacket says) represents the first time satellite imagery was directly used as a base layer for Bible maps. He writes that his source is a Landsat 5 image from January 18, 1987: “a cold, exceptionally clear and almost cloudless morning: the best of all possible mornings for a single contemporary image of the whole area.” He uses this image throughout the book and for his two-part Holy Land Satellite Atlas in 1999, which in turn serves as the basis for the NET Bible Maps (2003).

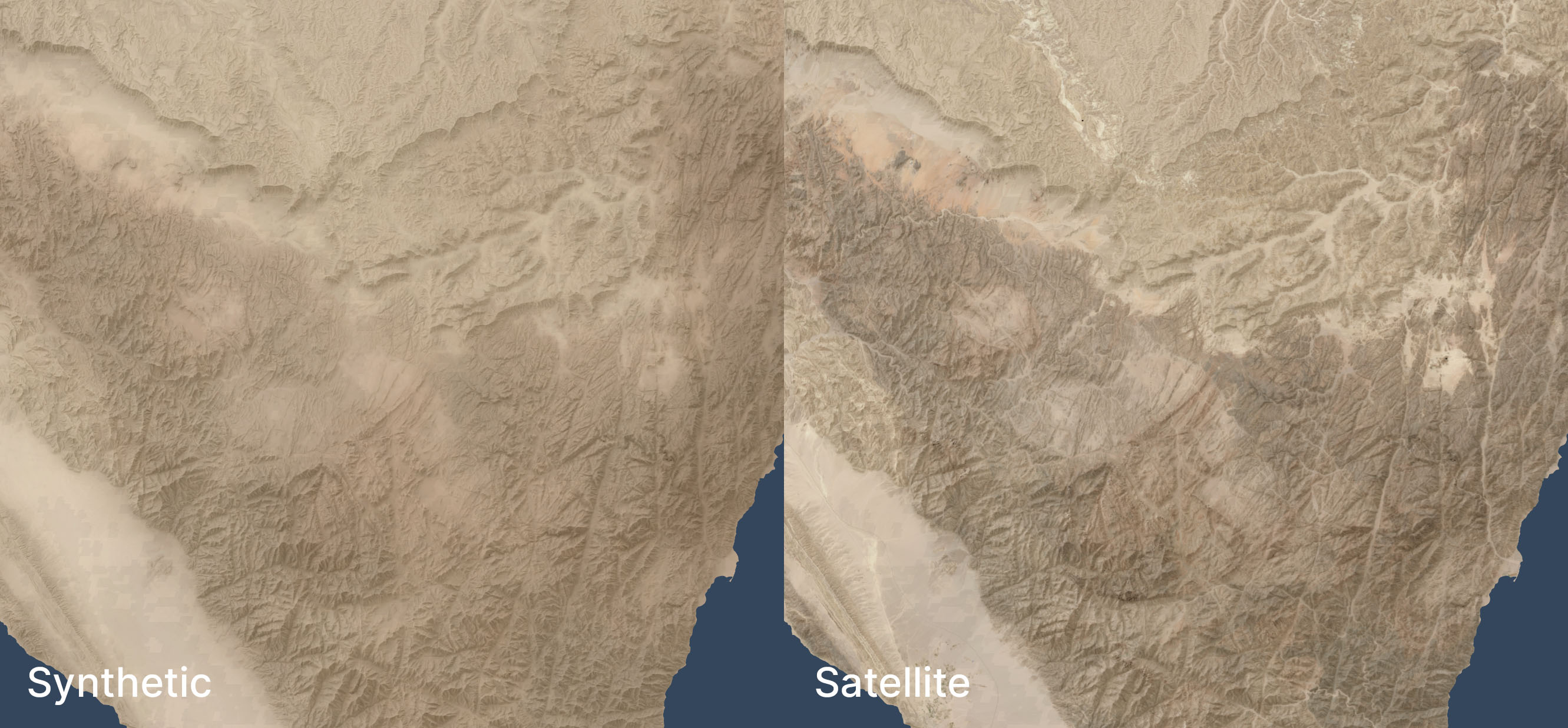

The U.S. government makes decades of Landsat imagery available, so I was curious whether it was possible to approximate Cleave’s classic look using modern methods. The answer is, “Yes, mostly”:

If you’ve worked with satellite imagery, you know that the data comes in “bands” (in this case, red, green, and blue) that you combine to make a final image. The decisions you make when combining these bands dramatically affect the look of the output, and there’s no objectively correct answer. I tried to come close to Cleave’s decisions from the early 1990s, but my water ended up darker and my highlights brighter than his. The result has a similar feel, though, down to the purple tones south of the Dead Sea. Matching was made harder because the print colors of Cleave’s image vary from book to book, which suggests either printing variations or multiple rendering refinements. So I tried to capture the character of the original, but the result is more an interpretation than a copy.
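To make the band-combining step concrete, here is a minimal Python sketch. It is not the exact process behind the image above or Cleave’s original rendering. It assumes the three bands are already loaded as same-sized NumPy arrays; the function names, the 2nd/98th percentile cutoffs, and the gamma parameter are illustrative choices, and they are exactly the kind of subjective decisions that change the final look.

```python
import numpy as np


def stretch_band(band, low_pct=2.0, high_pct=98.0, gamma=1.0):
    """Rescale one band to 0..1 with a percentile stretch, then apply gamma.

    low_pct / high_pct and gamma are hypothetical defaults, not values
    taken from any particular rendering.
    """
    band = np.asarray(band, dtype=np.float64)
    lo, hi = np.percentile(band, [low_pct, high_pct])
    if hi <= lo:
        # A flat band has no contrast to stretch.
        return np.zeros_like(band)
    scaled = np.clip((band - lo) / (hi - lo), 0.0, 1.0)
    # gamma > 1 brightens midtones; gamma < 1 darkens them.
    return scaled ** (1.0 / gamma)


def compose_rgb(red, green, blue, **stretch_options):
    """Stack three stretched bands into a (rows, cols, 3) color image."""
    channels = [stretch_band(b, **stretch_options) for b in (red, green, blue)]
    return np.stack(channels, axis=-1)
```

Calling `compose_rgb(red, green, blue, gamma=1.4)` instead of the default brightens the midtones without touching the darkest and brightest pixels, which is one way water and highlights can drift apart between two renderings of the same data.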

About Richard Cleave

Cleave himself sounds like a fascinating fellow. Robert North in A History of Biblical Map Making describes him in 1979: “Dr. R. L. W. Cleave of the British Navy, after serving hospitals in Jordan and becoming concerned with the lack of aerial survey material of the Holy Land, resigned his commission to accept the offer to prepare a pictorial archive for a Time-Life project. When the 1967 war intervened, he was limited to working inside Israel, and with the guidance of Père Jean Prignaud of the École Biblique he prepared and published 1500 aerial views of all major archeological and geographical features of Cisjordan. To these have already been added some 500 more views of Sinai, Göreme, and some other sites mostly in Turkey” (p. 142).

His photos consistently appeared in Bible reference works from 1967 through the late 2000s and remain high quality even compared to today’s imagery—especially since they capture a world from 60 years ago. His aerial view of the City of David represents, to me, one of the clearest ever captured. Compare a similar perspective from 2014, which shows many more buildings and is harder to parse at a glance.

Cleave worked with James Monson to produce the Student Map Manual in 1979. The ambition described in this book’s preface is astonishing for the time. Cleave’s “Wide Screen Project” describes an entire geographically indexed multimedia learning system: for the audio, cassettes; for the visual, audio-synchronized slides plus maps; for learning, the Student Map Manual, guided tours, and a poster exhibit. This proposed learning system provides a practical use for his library of thousands of photos.

In 1993, he combined 149 of these photos along with the aforementioned satellite view into The Holy Land: A Unique Perspective. The afterword to this book is also ambitious: he describes the now-common (thanks to Google Earth) practice of draping satellite imagery over a digital elevation model to produce a 3D view.
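The draping idea can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions, not Cleave’s method: it assumes the image and the elevation model are already aligned on the same grid, and the function name, `cell_size`, and `exaggeration` parameters are hypothetical.

```python
import numpy as np


def drape(dem, rgb, cell_size=30.0, exaggeration=1.0):
    """Pair each elevation sample with the image color at the same grid cell.

    dem: (rows, cols) elevations.
    rgb: (rows, cols, 3) image assumed to be co-registered with dem.
    Returns (vertices, colors), each with one row per grid cell, ready to
    hand to whatever 3D renderer you use.
    """
    dem = np.asarray(dem, dtype=np.float64)
    rgb = np.asarray(rgb, dtype=np.float64)
    if rgb.shape != dem.shape + (3,):
        raise ValueError("image and elevation model must share the same grid")
    rows, cols = dem.shape
    ys, xs = np.mgrid[0:rows, 0:cols]
    vertices = np.column_stack([
        xs.ravel() * cell_size,        # horizontal position across the grid
        ys.ravel() * cell_size,        # horizontal position down the grid
        dem.ravel() * exaggeration,    # height, optionally exaggerated
    ])
    colors = rgb.reshape(-1, 3)
    return vertices, colors
```

Vertical exaggeration is a common choice in terrain views because real relief is small compared with horizontal distances.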

Cleave and his son Adrian worked with “John K. Hall of the Israel Geological Institute, and Gennady Agranov and Craig Gotsman, computer scientists at the Technion, Haifa” to produce these 3D images, which would premiere in National Geographic’s June 1995 issue (“Satellite Revelations: New Views of the Holy Land”) and later form the core of 1999’s The Holy Land Satellite Atlas as part of RØHR Productions, Ltd. (Nicosia, Cyprus). In this 3D imagery, he uses SPOT panchromatic data to add detail (similar to Landsat 7’s panchromatic band).
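Using a sharper panchromatic band to add detail to color imagery is usually called pan-sharpening. I don’t know which method Cleave’s team used; the sketch below shows one simple, well-known approach, the Brovey transform, and assumes the color image has already been resampled to the panchromatic grid. The function name and the `eps` guard are illustrative.

```python
import numpy as np


def brovey_sharpen(rgb, pan, eps=1e-9):
    """Brovey-style pan-sharpening.

    rgb: (rows, cols, 3) color image, already resampled to the pan grid.
    pan: (rows, cols) higher-detail panchromatic band.
    Each color is scaled so the pixel's average brightness matches the
    panchromatic value while the ratios between colors stay the same.
    """
    rgb = np.asarray(rgb, dtype=np.float64)
    pan = np.asarray(pan, dtype=np.float64)
    if rgb.shape[:2] != pan.shape:
        raise ValueError("rgb and pan must cover the same grid")
    intensity = rgb.mean(axis=-1)
    ratio = pan / (intensity + eps)  # eps avoids dividing by zero in black pixels
    return rgb * ratio[..., np.newaxis]
```

The tradeoff is that brightness now comes entirely from the panchromatic band, so colors can shift where that band responds differently from the visible bands.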

This work required an international team in 1993; today you can (approximately) recreate it on a home computer. Including satellite imagery in Bible maps has become somewhat more common but remains unusual. Some of Tyndale’s current maps use a subtle satellite background. The Satellite Bible Atlas (2013) relies on satellite imagery for its whole premise. The Casual English Bible maps use 3D satellite images.

But Cleave wasn’t just thinking 3D in 1993; by adding a time dimension, he was thinking 4D:

Rohr Productions is now preparing a 2 1/2 hour videotape of 3D satellite animation, specifically designed for use with this atlas. This will have a 20 minute Introduction and 13 Regional Segments, each of approximately 10 minutes duration…. The spoken commentary in the video will be descriptive, designed to reinforce the regional commentary printed in the book.

Relevant low-level aerial photographs (selected from the book) will be inserted into the “flight path,” providing familiar details of the major Biblical/historical sites and geographical features, each presented in its appropriate regional context.

Therefore all three of the most important elements in the atlas will be fully represented in the videotape: viz. the regional commentary, satellite imagery and low-level aerial photography. The videotape will provide optimal visualization and the book optimal documentation. To be fully effective, both systems are necessary.

This system anticipates multimedia accompaniments to books. He also describes using a CD-ROM to provide interactivity in a way that didn’t become popular until twelve years later, with Google Earth’s release in 2005. The technology that underlies Google Earth didn’t even exist until 1999, at least six years after he wrote this paragraph:

In the case of the above videotape of simulated flights over the Holy Land, the actual flight paths have been predetermined for use in conjunction with the regional satellite maps in the atlas. Thus the viewer cannot alter these animation sequences in any way. Such personal intervention or “interactivity” is only possible if the 3D satellite data is supplied in digital format (on CD-ROMs), for use on the computer. Such use is already possible, of course, but only on the more powerful graphic work stations. We must still wait for comparable processing power and storage capacity in the PC world to provide this interactive option to a much wider group of Bible students, but it cannot be more than a few years away!

Cleave would ultimately produce this software. You can see some videos of a later version of it in use on YouTube. The effect is similar to Google Earth’s “tour” feature (which, again, came out more than a decade later). Here’s my recreation of the effect in Google Earth using the above image.

In all these cases—from aerial photos to multimedia education to satellite imagery to 3D views to 4D presentations to interactive explorations—Cleave saw the technological possibilities of the time and explored what they could mean for students of Bible geography.

What happened to the thousands of photos that Cleave took in the 1960s, though? Based on the hundreds he printed in his books and licensed to others, they’re very high quality and are an important historical record. Some of his posters and 3D satellite imagery remain available online (for now) in low-resolution forms, but I couldn’t find a repository of his photos. Maybe they live on as slides in a collection somewhere, waiting to be digitized and made more widely available. Until then, you can buy his books used or browse some of them on the Internet Archive.